Welcome back to the Lamb_OS Substack! As always, I am thankful to have my subscribers and readers stop by! If you are a regular reader of @Lamb_OS, then you know that you, the readers, are the only reason I do this. So as always, thank you for visiting!

Let me begin by introducing myself as Dr. William A. Lambos. I call myself a computational neuroscientist, and I’ve been involved with the AI field (one way or another) since about 1970. As far as credentialing, you can see the footnote below if interested.¹ I write a lot about AI, but not exclusively. When addressing a topic, I take pains to ensure my perspective is highly informed. I draw from many areas of study, and my conclusions, beliefs, and predictions often fall outside current or mainstream thinking. But these same beliefs are grounded in 50 years of study and rigorous cross-training in multiple fields. See my previous screeds on this Substack to learn more, and judge for yourself.

Please subscribe today if you have not already done so. This Substack is free! So, please subscribe for whatever reason might appeal to you, though I’d hope you do so for the value it offers.

I am blessed to live in two professional worlds: clinical neuropsychology, and data science (also called analytics). It should be of little surprise, then, that almost all my posts here in Lamb_OS are about AI and (the utter folly and fool’s errand called) AGI. Also, since my regular readership grows ‘organically’, my impression is that 80% or more of you already know my positions on AI/AGI in their many incarnations.

For a quick summary, I don’t dislike what any computer code does, as long as it’s been vetted for safety (and, we would hope, accuracy, as relevant). If you want to call a deep learning model “Artificial Intelligence”, and this makes you happy, I get it. But you have to understand that by using that term, you are putting yourself and/or others at risk of becoming delusional. It implies that the model is manipulating information as a human brain might; worse, it implies by induction that any and all things done by human brains can be implemented in silicon-based computer code. This, as we know, is “The AGI Fallacy.”

For the purposes of this post, my core tenet (and prediction) is simple: The quest for ‘AGI’ — which does not exist and likely never will — is best characterized as a ‘zombie’ mind virus. It’s like a love potion that makes petting a black widow spider an utterly irresistible desire (that never ends well). Once you start to believe that AGI will emerge from whatever the “AI Tech du jour” is (GPTs in this go-round), the AGI Fallacy predicts three outcomes:

1. A manic, febrile, and hysterical lust for code, money and power emerges that engulfs almost everyone in its path. It will touch and consume most of those in the field, much like the Dutch Tulip Mania did the residents of Holland in the 1630s.

2. No AGI will appear.

3. The field will collapse, unfathomable wealth will be lost, and the next AI Winter will follow.

This has been the history of this field since the 1950s, and this iteration will be no different. Why not?

‘AGI’ Cannot Be Attained By Mapping Inputs to Outputs

Human intelligence is currently found only in living people. And living organisms are all about one thing: Remaining alive. At the cellular level. Everything we humans are capable of, for better or worse, depends upon the cellular substrate and associated DNA to happen. Each cell (and type of cell), interacting with an uncountable number and variety of other cells (and cell types), enables the “intelligence” we seek to emerge from a unique combination of genetic and environmental (epigenetic) factors. Our DNA determines the “lowest level” approach-avoidance decisions when engaging with the environment. It is the start of choosing appropriate emotions for everything we encounter in the world, and it has a say in each human’s behavioral choices, experienced emotions and the importance of everything else “out there” (called its salience). This arrangement creates Agency, which is the foundation of the broad and generalized adaptive functioning we call “intelligence.”

But we’re digressing. Let’s address the coming GPT-Pocalypse, and the manic lust for money, celebrity and power that accompanies the march to AGI, delusional or otherwise, a little later.

You see, AGI is a very indefinite construct. What, specifically, are its proposed properties and abilities? The truth, it turns out, is that nobody knows:

“I’ve seen many different definitions of AGI,” said Melanie Mitchell, a professor at the Santa Fe Institute and author of the book Artificial Intelligence: A Guide for Thinking Humans. “None of them are rigorous.”

This isn’t because people disagree on some central hypothetical question specific to AGI. It’s because we (psychologists and neuroscientists) simply can’t agree on how to define human intelligence. And trust me on this, we have tried.

There are well over a dozen historical and current conceptualizations of (human) intelligence in the literature. A convenient summary of the twenty or so proposed ideas — the conceptual approach — can be found here.

The underlying theory of intelligence tests is that there is some (perhaps gaseous?) property shared not only within the various abilities of a given individual, but across every (neurotypical) member of our species, that imbues us with “intelligence”. Charles Spearman, who pioneered the statistical technique of factor analysis, proposed a single factor that he supposed underlies all human intelligence, which he called the general factor, or “G factor.” But does “G” even exist? Is it mapped to the brain in any way? According to one of the most esteemed living neuropsychologists, maybe not:

“…what are the brain structures whose individual variations determine these global traits? This question is directly related to the search for general intelligence—the “G factor”—and for measures of it, which are outside the scope of this book. The issue remains a matter of heated scientific debate. The last few decades have witnessed a departure from the notion of a single G factor in favor of “multiple intelligences.”

Excerpt From The New Executive Brain, Elkhonon Goldberg

https://books.apple.com/us/book/the-new-executive-brain/id806803082

Another problem no one talks about in cognitive neuroscience is that since defining intelligence has proved implausibly difficult, most theorists have concerned themselves instead with measuring intelligence. One could be forgiven for assuming that a construct must be defined before anyone attempts to measure it, but not in psychology! In fact, ‘researchers’ assessing the many components of intelligence have been much more motivated to create (and sell) tests of intelligence (“TOIs”) than to elucidate the construct. And why not? It’s a very lucrative business! (It is also one with a somewhat sordid history of attempts at social engineering.)

In short, none of the theoretical approaches to defining or measuring ‘intelligence’ is sufficient to account for even a fraction of the domains of cognitive functioning, emotional regulation, or knowledge representation known to be available to people.

Fortunately, the field of neuropsychology closely associates both brain areas and networks with the ability to perform and express experience, and is therefore among the fields best suited to offer theories of intelligence. The sources of information are myriad: structural and functional neuroimaging, the effects of brain insults on performance, TOIs, correlational studies, hefty statistical tools for measuring weak but persistent correlations in multivariate data, the impacts of drugs and medications on the brain and on functioning, knowledge from DNA analyses, and many, many more. Neuropsychology tells us:

The concept of ‘IQ’, a single number that neatly summarizes one’s intelligence, is flat out nonsense.

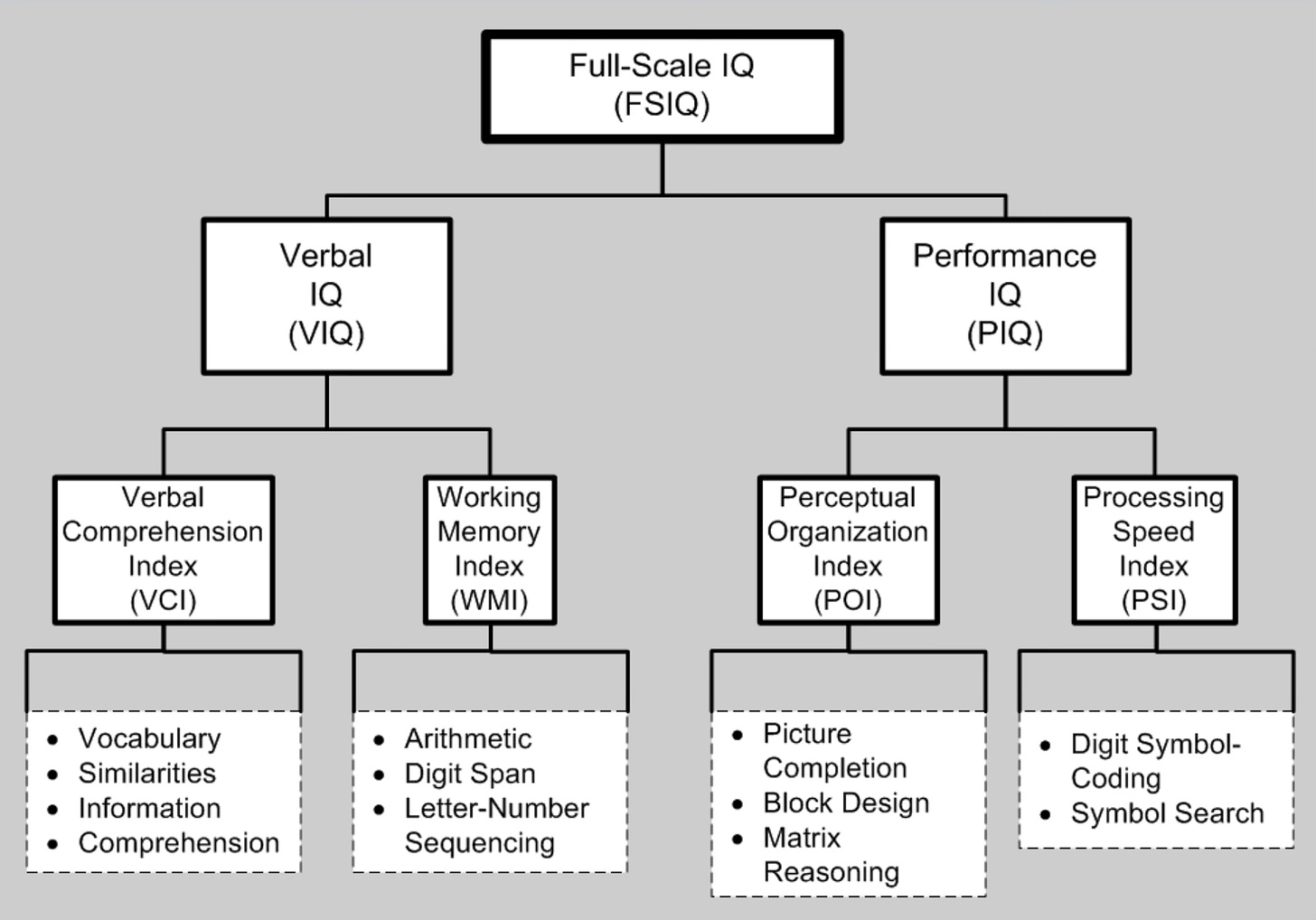

The most widely used TOI in the US and England is the Wechsler Adult Intelligence Scale. It gives one “full-scale” IQ score (FSIQ), Verbal and Performance IQ scores, and so forth, as depicted below:

It is not “great stuff.” It is basically “made up.” As my daughter (now a psychologist herself) used to say when she was in her teens, “As IF!” The individual tests show far more cross-correlation than would be expected if the tests assessed skills that were independent. The baseline data are suspect, as are their underlying distributions, and all scores are “converted” to the normal (Gaussian) distribution, without justification. Finally (for here), the test is entirely overreliant on language, and its norms are based on US and Western European base scores and are highly culturally biased.
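To make the “converted to the normal distribution” complaint concrete, here is a minimal Python sketch of rank-based normalization. It is an illustration only, not the Wechsler scoring procedure: the function name `to_iq_scale`, the simulated raw scores, and the target mean of 100 with a standard deviation of 15 are all assumptions made for the example. The point it shows is that ranking scores and reading them off a normal curve yields a bell-shaped result no matter how skewed the raw data were.

```python
import random
from statistics import NormalDist, mean, median, stdev


def to_iq_scale(raw_scores, mean_iq=100.0, sd_iq=15.0):
    """Map raw scores onto a normal curve by percentile rank (hypothetical helper).

    Only the rank order of the raw scores survives; their original
    distribution shape is discarded.
    """
    n = len(raw_scores)
    # Indices of the raw scores, sorted from lowest to highest score.
    order = sorted(range(n), key=lambda i: raw_scores[i])
    std_normal = NormalDist()  # mean 0, standard deviation 1
    scaled = [0.0] * n
    for rank, idx in enumerate(order):
        # Midpoint percentile keeps the value strictly between 0 and 1.
        percentile = (rank + 0.5) / n
        scaled[idx] = mean_iq + sd_iq * std_normal.inv_cdf(percentile)
    return scaled


# Assumed, simulated raw scores: deliberately skewed, nothing like a bell curve.
random.seed(0)
raw = [random.expovariate(1.0) for _ in range(1000)]
iq = to_iq_scale(raw)

print("raw mean vs. median:", round(mean(raw), 2), round(median(raw), 2))
print("scaled mean and SD:", round(mean(iq), 1), round(stdev(iq), 1))
```

The raw mean and median differ because the simulated data are skewed, while the scaled scores center on 100 by construction. The normal shape comes from the transformation, not from the data.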

Neuropsychological assessments, as noted above, are often offered as measuring some aspect of intelligence. These assess ability (based on performance and/or knowledge) in dozens of different domains:

Language

Memory

Attention

Visuospatial Reasoning

Somatosensory Representation

Executive Functioning (Prefrontal cortices):

Planning, Organization and Judgement

Self-control, understanding consequences, theory of mind

Concepts and constructs (language-based and otherwise)

Pattern recognition

Analogies and metaphors

Irony and sarcasm

Emotional functioning and flexibility

Creativity

Flexibility / Context sensitivity

Music and art fluency

… many more — this is just the start

An individual’s TOI scores over time and across tasks are positively correlated, but not to the extent one might think. The R^2 value of one individual’s scores over repeated tests (using alternate forms of the test as needed) is barely over 0.35. So roughly two-thirds of the variance in separate test scores is unexplained.
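The arithmetic behind that statement can be sketched in a few lines of Python. The function name `variance_breakdown` is hypothetical, and 0.35 is simply the figure quoted above; reading the square root of R^2 as a correlation assumes a simple two-variable linear relationship.

```python
import math


def variance_breakdown(r_squared):
    """Split an R^2 value into explained and unexplained variance (hypothetical helper)."""
    if not 0.0 <= r_squared <= 1.0:
        raise ValueError("R^2 must lie between 0 and 1")
    return {
        "explained": r_squared,
        "unexplained": 1.0 - r_squared,
        # For a simple linear relationship, |r| is the square root of R^2.
        "implied_correlation": math.sqrt(r_squared),
    }


result = variance_breakdown(0.35)  # 0.35 is the value quoted in the text
print(round(result["unexplained"], 2))          # 0.65
print(round(result["implied_correlation"], 2))  # 0.59
```

An R^2 of 0.35 therefore leaves about 65% of the variance unexplained, even though the implied test-to-test correlation of roughly 0.59 sounds respectable on its own.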

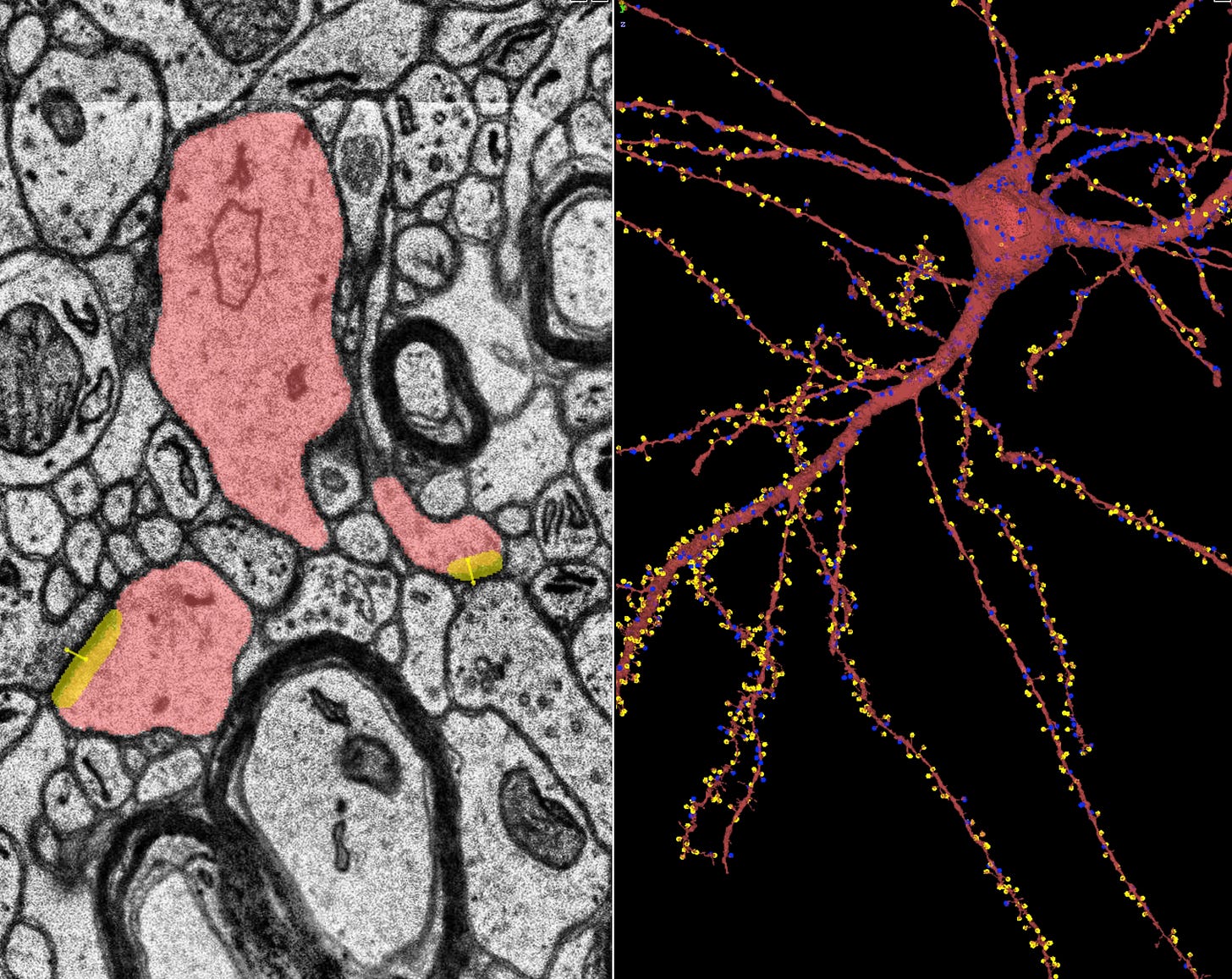

The relationships between domains of intelligence and the function and structure of the human brain are compelling, but more than two-thirds of the abilities are not localized cytoarchitecturally. Rather, they arise from dynamic functional networks in the brain. Nearly every one of the proclivities listed above that TOIs assess involves brain networks that include both cortical and subcortical (or even brainstem) areas, and the symphony of neural music proceeds almost constantly.

Whatever AI engineers expect “AGI” models to do, it cannot be attained by scaling stochastic transformer models (GPTs and such). What GPTs do has nothing whatsoever to do with what humans recognize as intelligence. Intelligence implies self-regulation, intent and agency, sensitivity to consequences enabling adaptation and emotional flexibility, broad models of the world with implicit causality (or at least Newtonian mechanics), an emotional connectedness to other humans, and much else.

Each of the above clusters of abilities is foundational for any agent said to be capable of AGI. If you want to build an “AGI”-capable model, then your current tech must include a means to instantiate, computationally, everything on the list above (and more, in truth). If that current tech works like a GPT, irrespective of parameter count, then there will be no AGI.

To return to GPTs and AI, I remain firm in my prediction that the march to AGI will assure the collapse of AI corporate equity values and market caps, while driving down much of the rest of the tech sector for a little while. Foundational wealth shall evaporate, and anybody who even reminds you of Sam Altman, Elon, or Zuck will feel like Frankenstein’s creature running from villagers with pitchforks and torches. This is why every new round of tech that calls itself ‘AI’ invariably leads to self-immolation.

I knew by Thanksgiving of 2023 that the whole GPT movement was screwed, starting with the OpenAI / Altman dumpster fire. The floodgates had opened and the white hot mania erupted like lava from a Hawaiian volcano. Sure enough, it became immediately apparent (at least to me) not only that GPTs were doomed, but that the losses in money and power, the damage to reputations, and the fallout from copyright lawsuits would rapidly lead to a new AI Winter. Since then I have predicted that any advances would quickly get waylaid (i.e., collapse), as is currently happening. All because of the absolute necessity of attaining AGI: presumably, abilities that equal or exceed the capabilities of people. Which abilities? Whose abilities? Exceed how, exactly?

As my regular readers know, I was confident from the get-go that transformer-based deep learning models would lead to the frenzied mania we are seeing. Based on articles seemingly everywhere (The Information, Bloomberg, Wired, The WSJ and NYT, Goldman Sachs… even Gary Marcus!), it is finally collapsing like a star going nova, and thus far at least, my predictions are playing out. GPTs have probably reached maximum scale due to a lack of new exemplars on which to train. Where is GPT-5? Transformer models are already disappointing everyone but the Zombie Masters. Soon, everybody involved will go broke, or worse. A lot of people will get hurt.

As far as watching GenAI devour itself, I’m truly not happy about what’s happening, and the worst is yet to come. But really, the leaders and engineers should have known better. After Blake Lemoine at Google decided his LaMDA chatbot was sentient, there should perhaps have been a public reckoning that computer engineers are not assured to be appropriate subjects for taking the Turing Test.

Maybe they’re not intelligent enough to know better?

Thanks for stopping by, and see you again soon!

Bill

¹ I hold a postdoctoral certification in clinical neuropsychology and a license to practice in California and Florida. I’ve been coding since mainframes were the only accessible computers and LISP was the ‘lingua franca’ of AI (ca. 1970-81), but when the Zilog microprocessors appeared (anyone remember the Z-80?), I learned to code in assembly language. Finally, I hold master’s degrees (one quite recent) in computation and data science.